SEMICOLONY

MA Computational Arts thesis project

Goldsmiths, University of London

2015

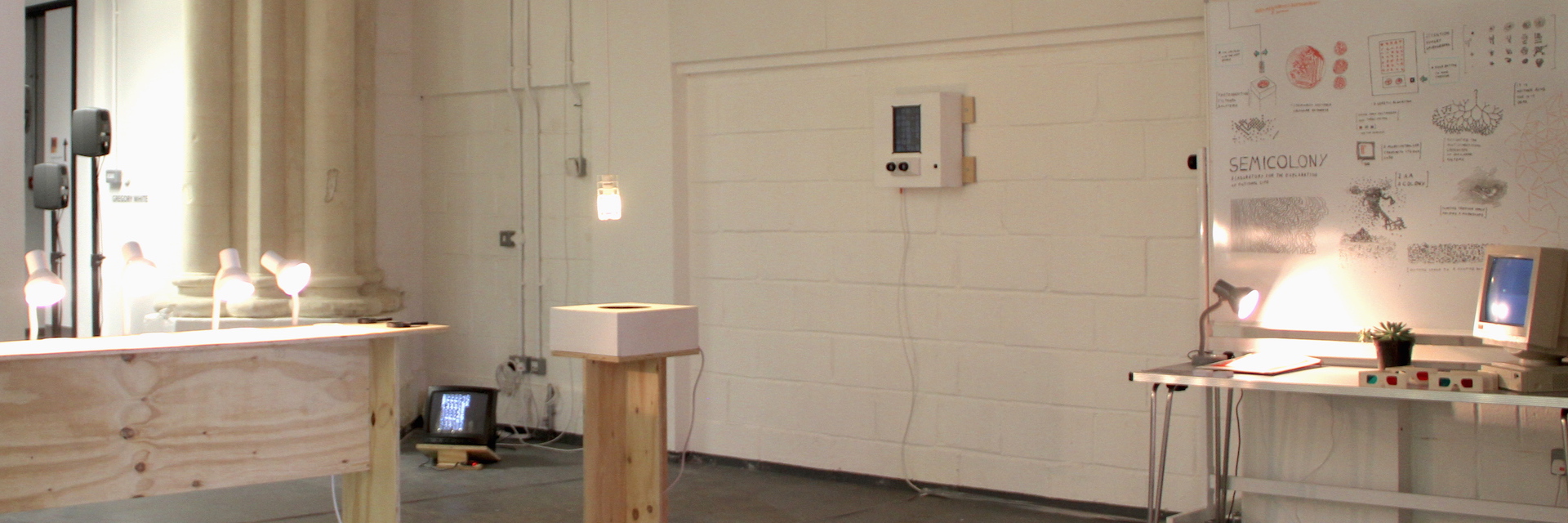

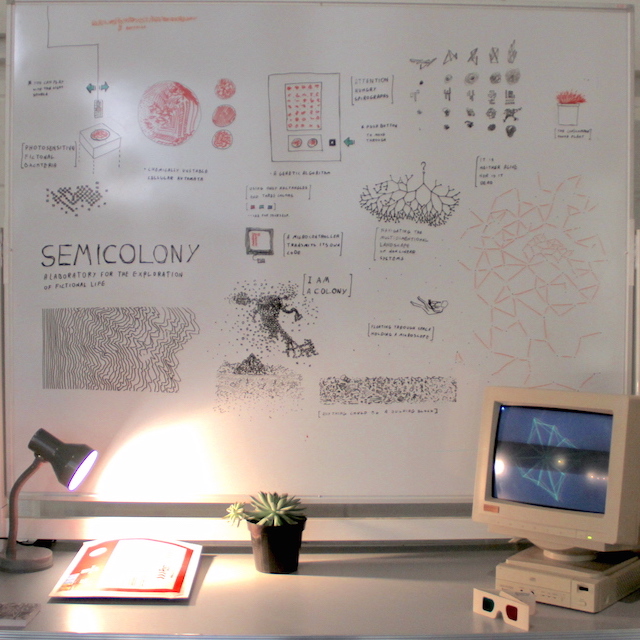

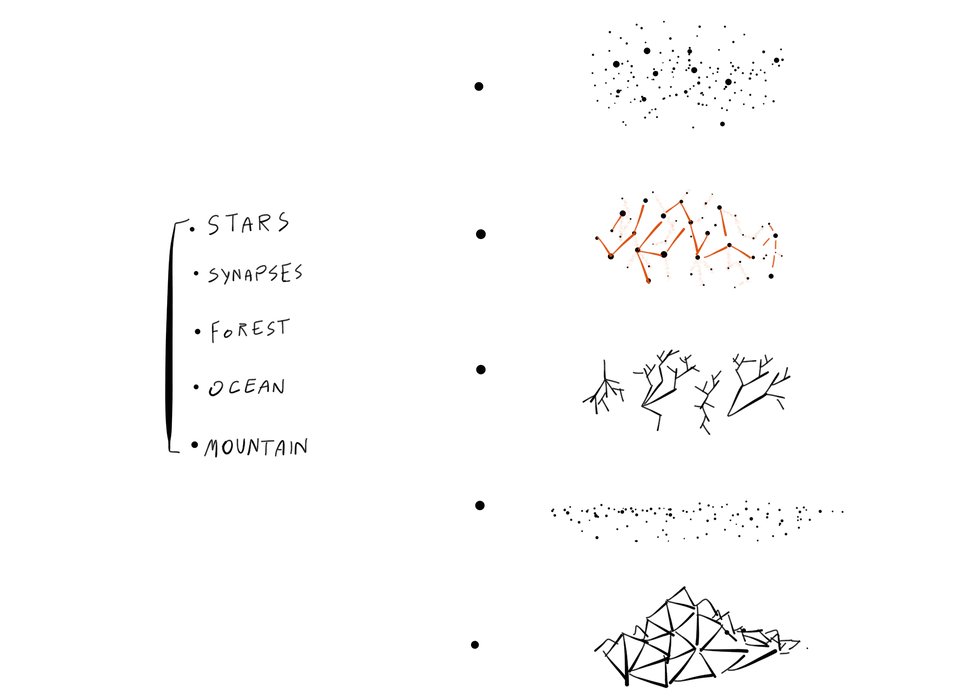

Semicolony is a series of software experiments in artificial life, conducted over the course of one year. Each experiment attempts to recreate a particular phenomenon associated with living systems as an abstract process embedded in a virtual environment. The project adopts an aesthetic that is neither biomorphic nor strictly digital. It deliberately blurs this line to evoke a sense of exploring the vast space of phenomena between the living and non-living realms, ultimately raising the question of what exactly constitutes a living thing.

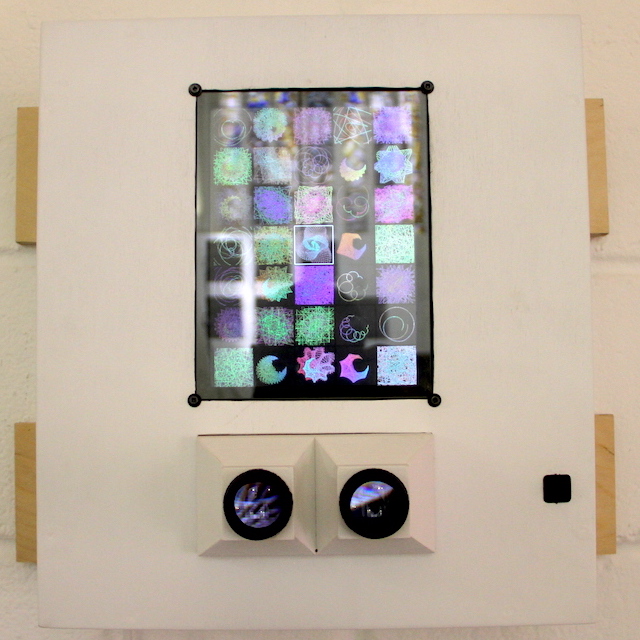

The installation is an interactive study of nonlinear systems, evolution, emergence and morphogenesis. It takes the form of a fictional laboratory, offering instruments for observing, exploring and interacting with fictional life forms. The lab borrows design cues from iconic 1980s consumer electronics, inviting visitors into a familiar yet subtly estranged experience. The organisms featured in SEMICOLONY do more than offer a glimpse into their own unlikely existence: some of them actually rely on our attention for survival.

Evolutionary Algorithms

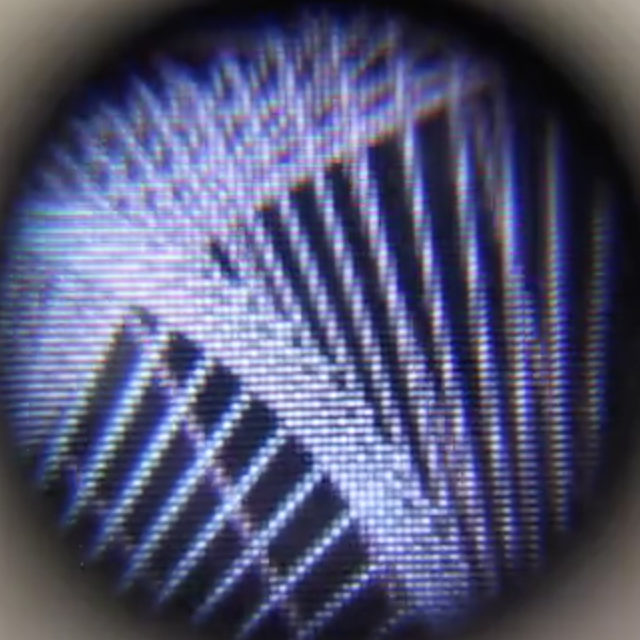

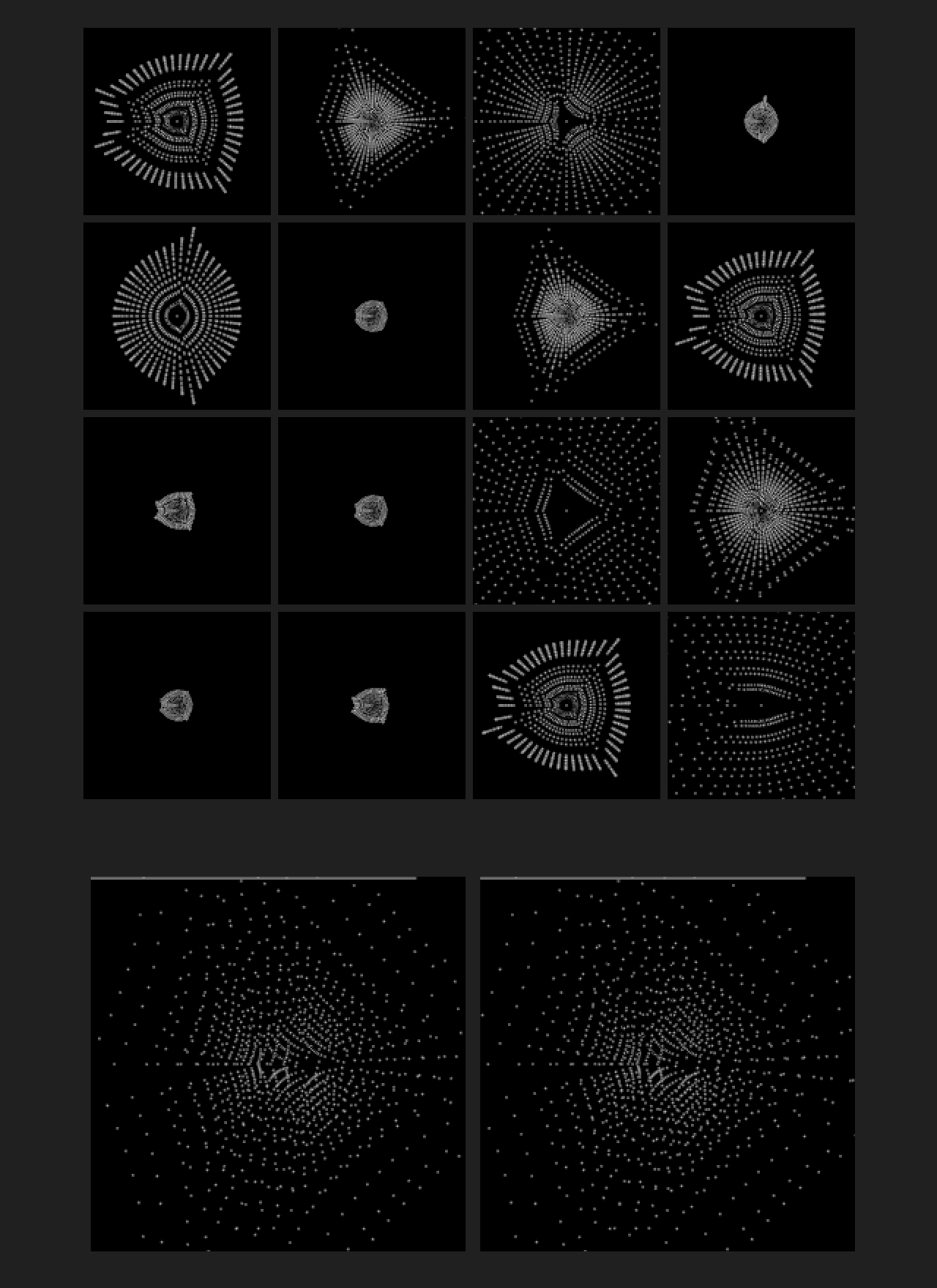

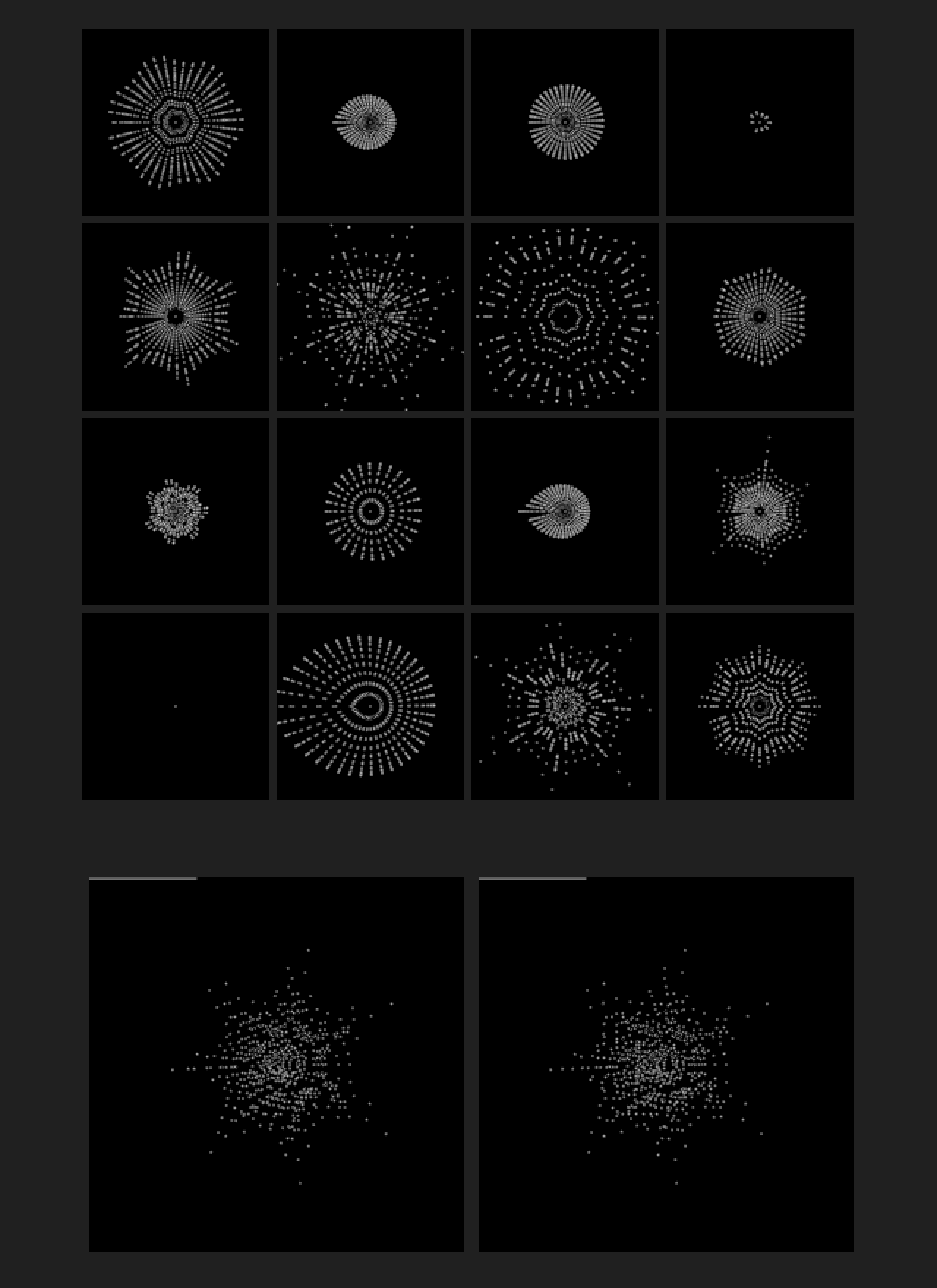

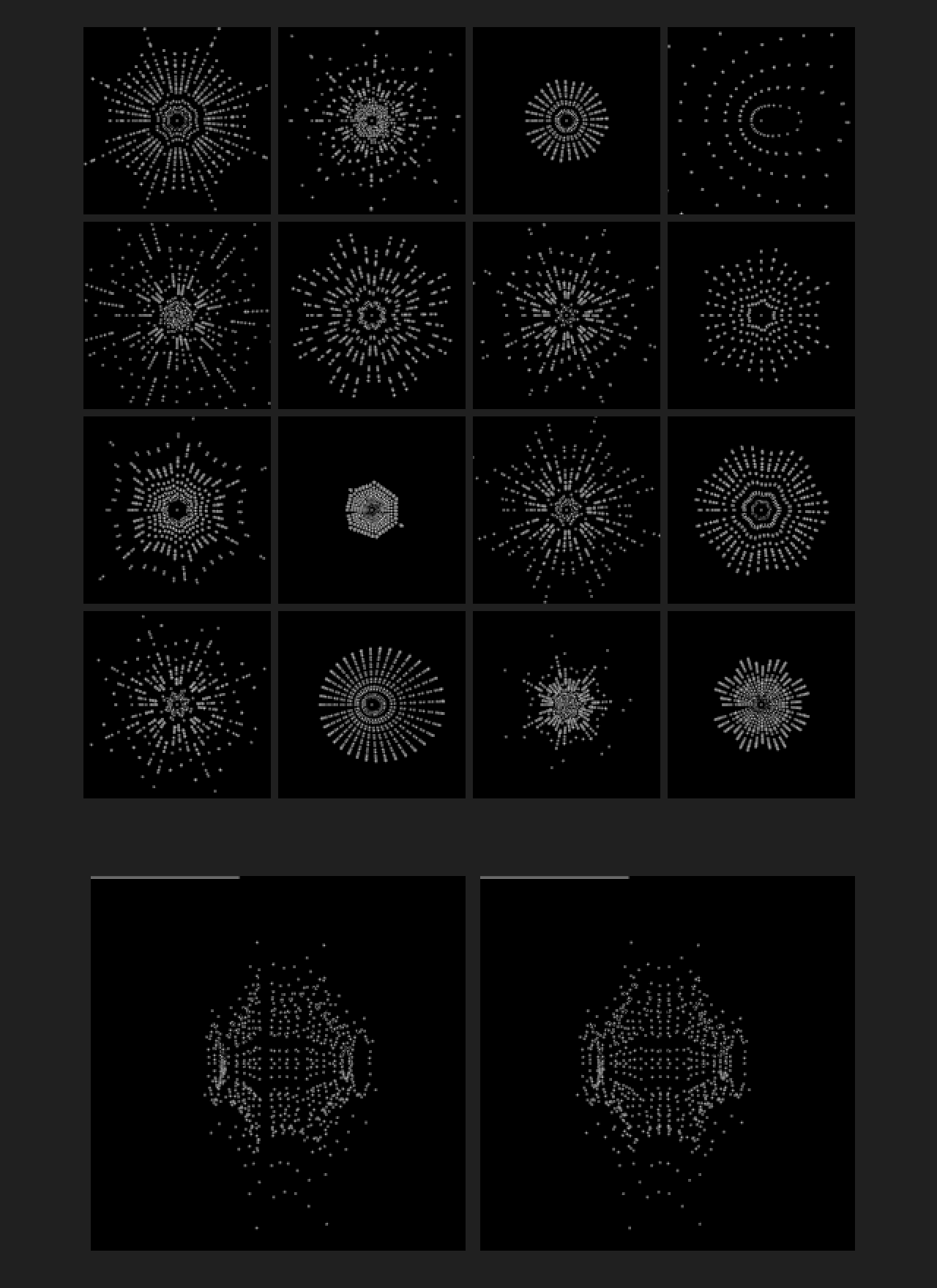

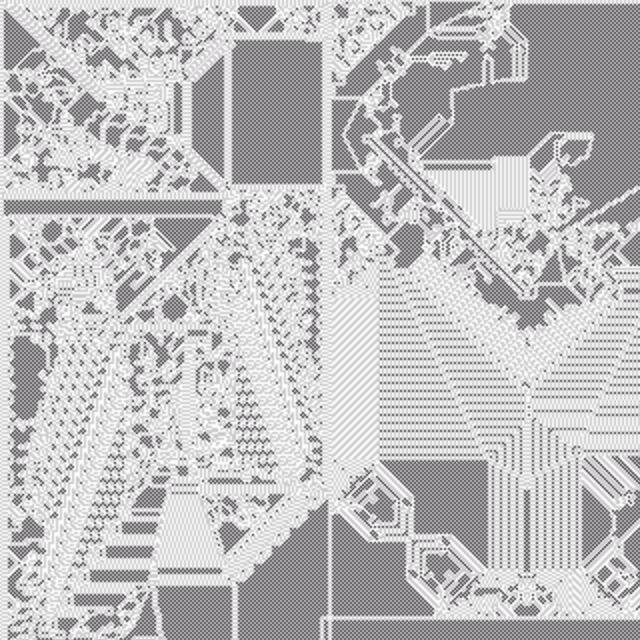

Revolving is an interactive genetic experiment that allows an observer to direct the evolution of dynamic graphical elements towards aesthetically favourable configurations over time. This is an open-ended process; it leans heavily on intuition and taste. The experiment examines how simple modes of interaction may be used to drive complex processes over sustained periods of time.

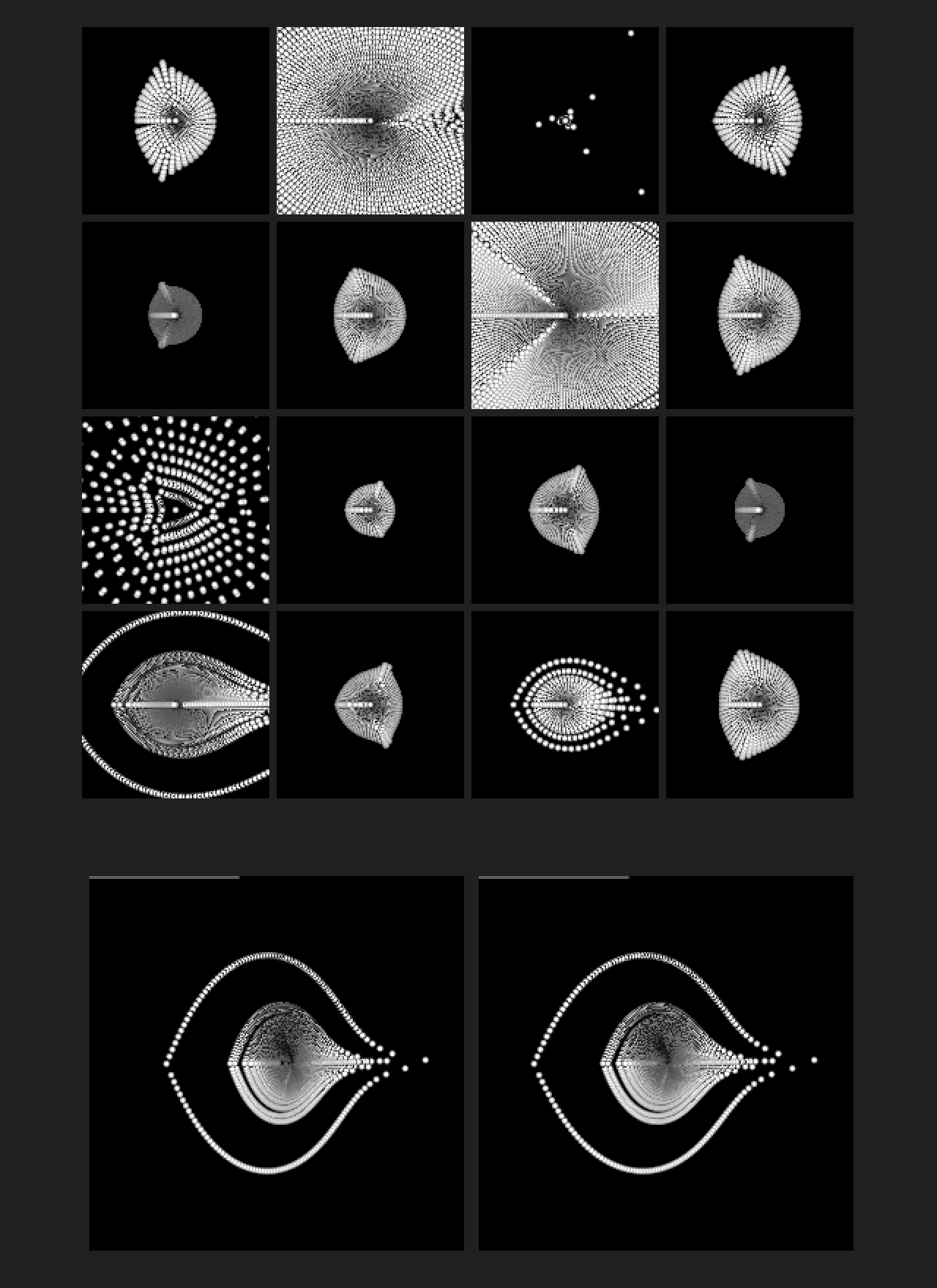

Early sketches and variations

The graphical elements could be literally any structure whose properties are numerically defined. Initial experiments included colour sequences, polylines, spirographs (epitrochoids), superformulae, 3D rigid-body simulations and, later on, cellular automata. First, a population of randomly generated configurations is produced. Second, it is displayed with a user interface that allows any number of individuals to be selected; several such interfaces were considered, including eye tracking, mouse selection and timed single-click iterations. Third, a new generation of individuals is created by combining the numerical properties of the selected individuals only. The new generation is displayed and the process repeats.
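
The three steps above can be sketched in C++. This is a minimal sketch under stated assumptions, not the project's code: each genome is a hypothetical vector of numeric properties normalised to [0, 1], a child takes each gene from one of two randomly chosen selected parents (uniform crossover), and mutation adds Gaussian noise. The names `Genome`, `randomPopulation` and `nextGeneration`, and all parameter values, are illustrative.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <random>
#include <utility>
#include <vector>

// Hypothetical genome: every numerically defined property of a graphical
// element (line count, hue, curve radii, ...) normalised to [0, 1].
using Genome = std::vector<double>;

// Step 1: a population of randomly generated configurations.
std::vector<Genome> randomPopulation(std::size_t count, std::size_t genes,
                                     std::mt19937& rng) {
    std::uniform_real_distribution<double> unit(0.0, 1.0);
    std::vector<Genome> population(count, Genome(genes));
    for (auto& genome : population)
        for (auto& gene : genome) gene = unit(rng);
    return population;
}

// Step 3: breed a new generation from the individuals the observer selected.
// Each gene comes from one of two randomly chosen selected parents (uniform
// crossover) and is mutated with probability mutationRate by Gaussian noise
// of standard deviation mutationScale, then clamped back into [0, 1].
std::vector<Genome> nextGeneration(const std::vector<Genome>& population,
                                   const std::vector<std::size_t>& selected,
                                   std::size_t count, double mutationRate,
                                   double mutationScale, std::mt19937& rng) {
    assert(!population.empty() && mutationScale > 0.0);
    if (selected.empty())  // nothing chosen: start again from random individuals
        return randomPopulation(count, population.front().size(), rng);

    std::uniform_int_distribution<std::size_t> pick(0, selected.size() - 1);
    std::uniform_real_distribution<double> unit(0.0, 1.0);
    std::normal_distribution<double> noise(0.0, mutationScale);

    std::vector<Genome> children;
    children.reserve(count);
    for (std::size_t i = 0; i < count; ++i) {
        const Genome& a = population.at(selected[pick(rng)]);
        const Genome& b = population.at(selected[pick(rng)]);
        Genome child(a.size());
        for (std::size_t g = 0; g < child.size(); ++g) {
            child[g] = unit(rng) < 0.5 ? a[g] : b[g];
            if (unit(rng) < mutationRate)
                child[g] = std::clamp(child[g] + noise(rng), 0.0, 1.0);
        }
        children.push_back(std::move(child));
    }
    return children;
}
```

Whatever the selection interface (mouse, eye tracking or timed clicks), it only needs to produce the `selected` indices. A `mutationScale` that is large relative to the [0, 1] gene range is what keeps changes visible from one generation to the next.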

Interactive evolutionary algorithms are inherently slow compared to automatic fitness selection. This makes them poor candidates for problems whose solutions are unknown but whose fitness can be well defined, since a fitness function can evaluate candidates far faster than a human. In the context of a computational arts practice, however, an interactive approach may be used to explore configuration spaces that are neither known nor well defined. Mutation and crossbreeding are deliberately far more dramatic than in biological evolution or in automated computational approaches, so that the human operator can notice the changes between successive generations. For the same reason, the number of individuals in each generation is also severely limited: effective observation and selection become exceedingly difficult once their number grows beyond a few dozen. These limitations notwithstanding, the experiment demonstrates how a simple and purely intuitive selection process can drive the evolution of dynamic visual imagery through a high-dimensional space of possible configurations.

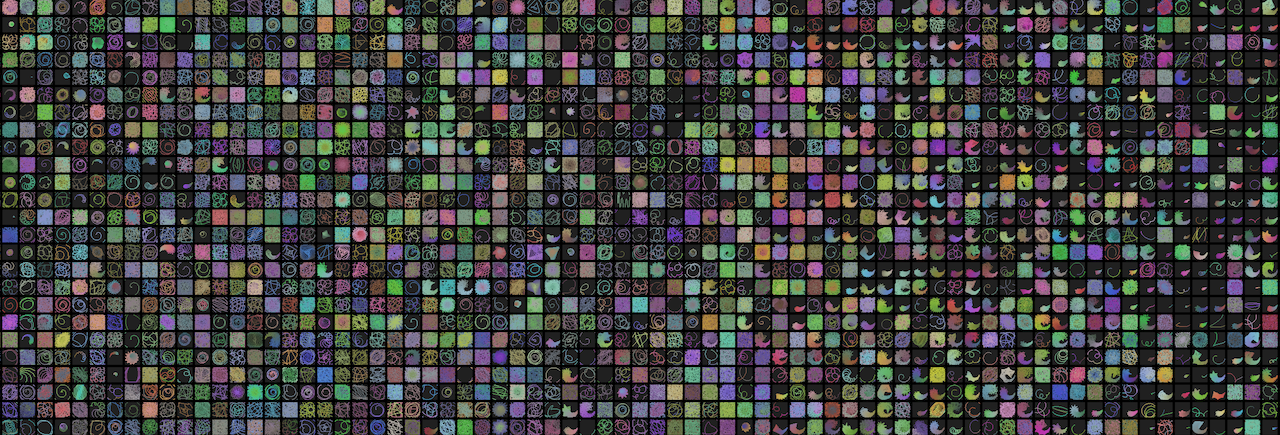

For the final degree show, this experiment was implemented as a standalone, custom-built embedded computer featuring a stereoscopic display and a sensing mechanism for measuring audience engagement. Over the course of the four-day exhibition, the short genealogy shown below spanned 70 generations. Even a simple visual examination reveals the diversification caused by surges of visitors on the opening and closing nights.

70 generations over a four-day exhibition. Evolution is driven by visitor engagement.

Swarm Dynamics

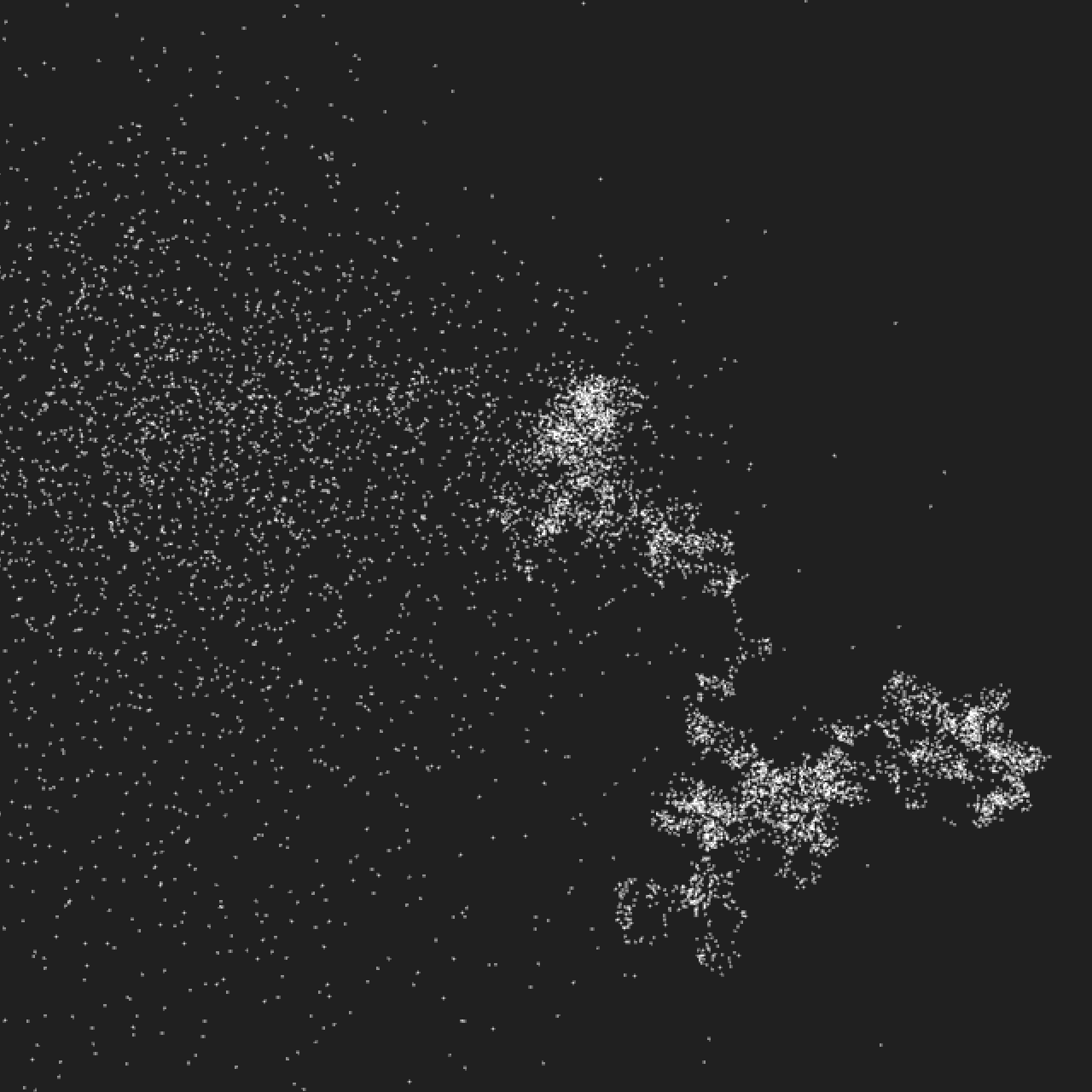

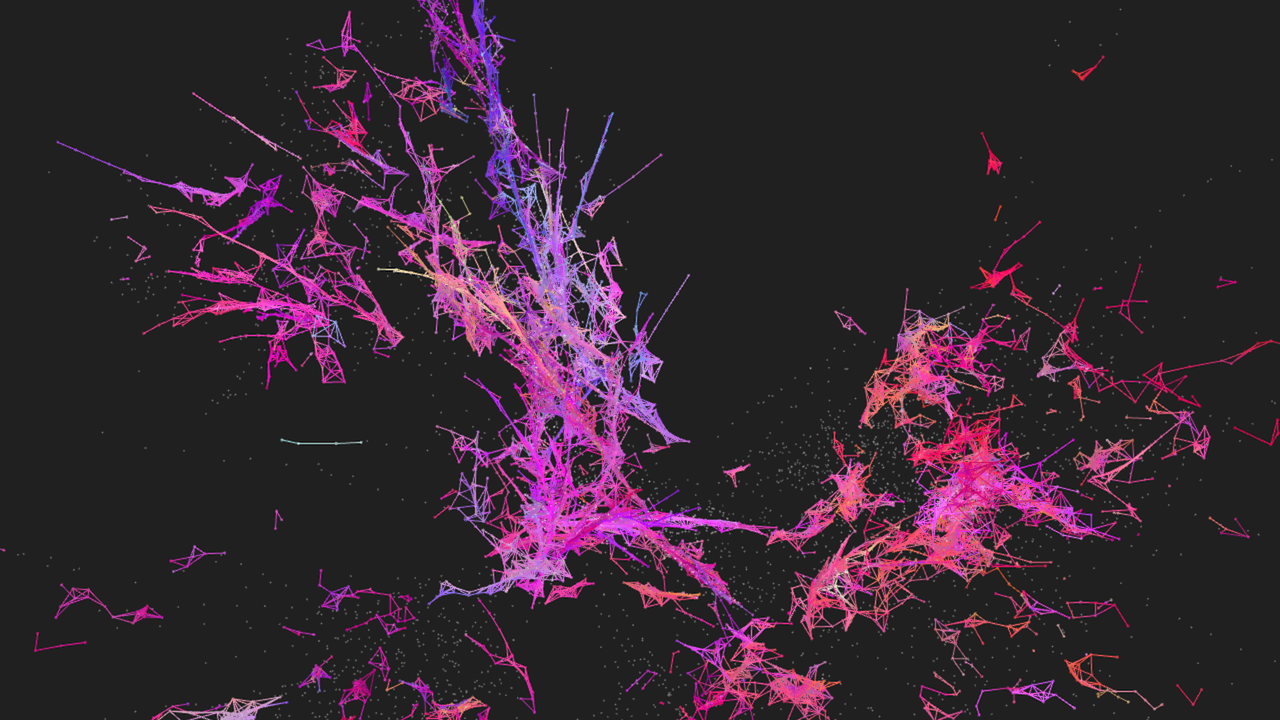

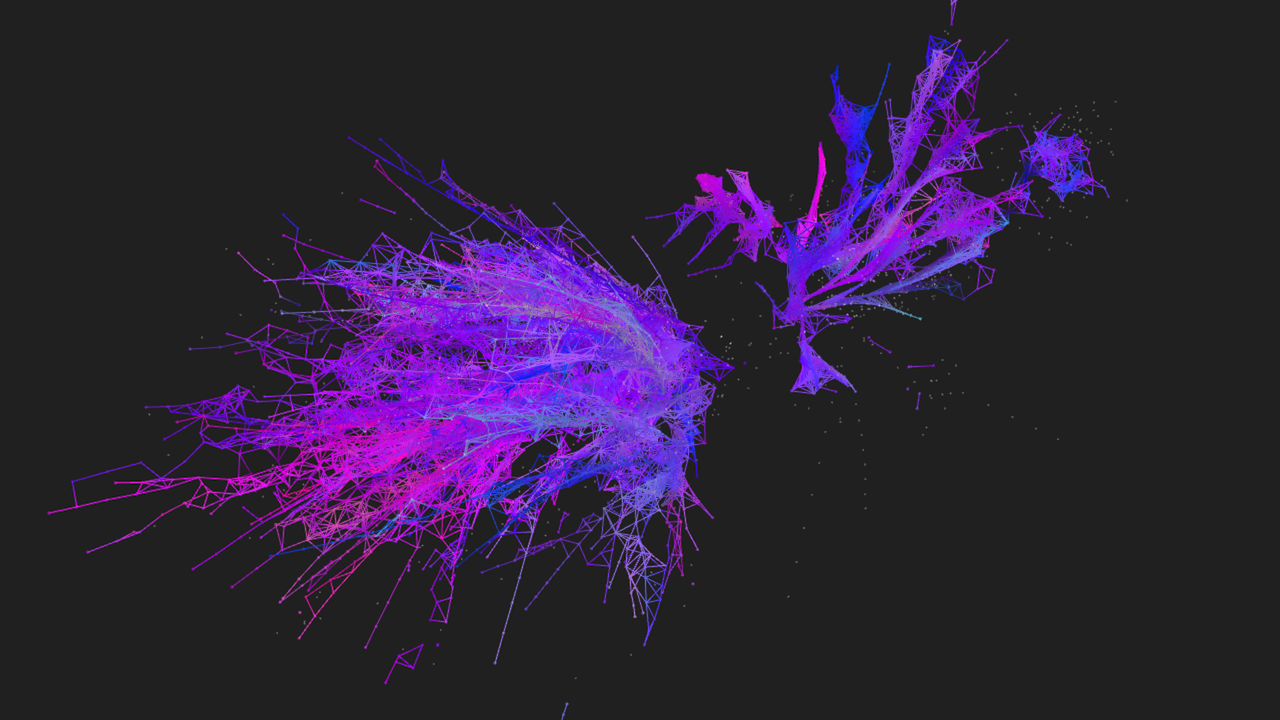

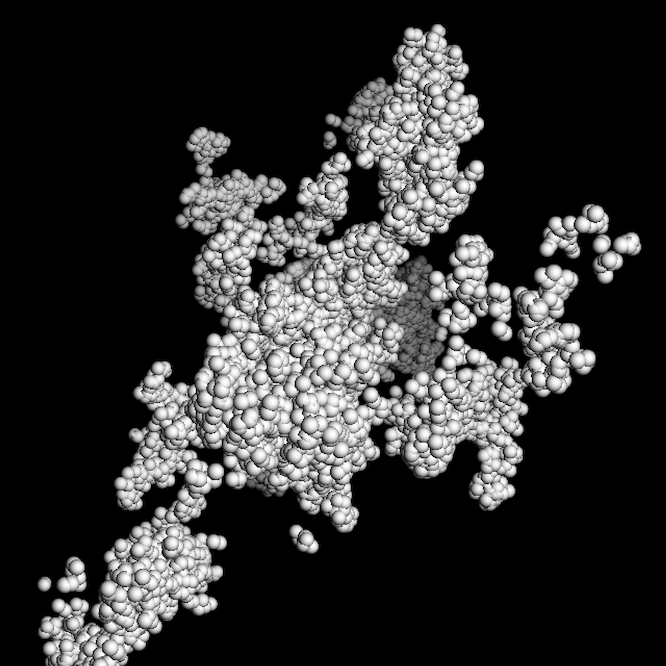

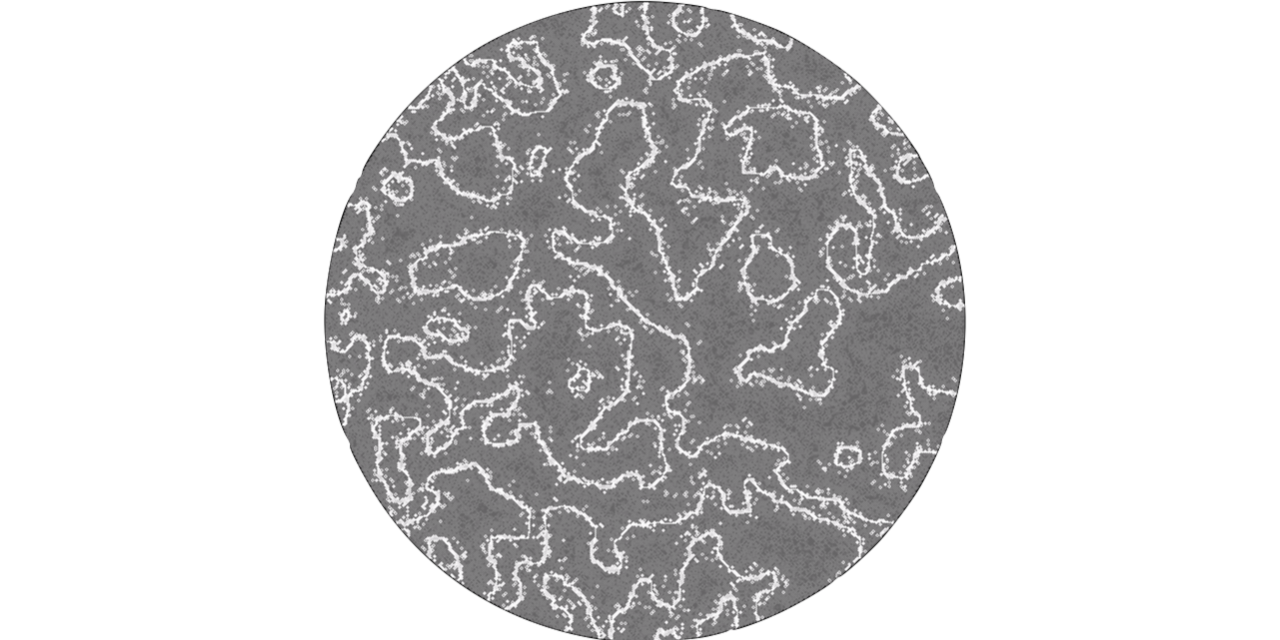

Individual life form is a study of morphogenesis through group dynamics. The experiment starts with a single point (a vertex) in virtual 3D space. The vertex has a finite amount of “energy”, which it can spend either on moving around autonomously or on spawning a new vertex. Its offspring carry slightly mutated attributes, such as colour and various heuristics. Once its “energy” is depleted, the vertex essentially “dies”, revealing its skeletal structure. To avoid rapid exponential growth, lifespan, energy, range of motion and rate of reproduction must all be carefully balanced; the result bears some visual resemblance to plant morphology.
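
The energy budget described above can be sketched as follows, assuming that on each step a vertex either spawns (with some probability, and only if it has enough energy) or takes a random step that costs a fixed amount of energy. The `Vertex` and `GrowthParams` types, their fields and default values are hypothetical; the original's heuristics and motion rules are not specified here, and random motion stands in for them. Parent indices record the branching skeleton.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

struct Vec3 { float x = 0, y = 0, z = 0; };

// Hypothetical vertex state; names and default values are illustrative only.
struct Vertex {
    Vec3 position;
    float hue = 0.5f;     // heritable attribute, slightly mutated in offspring
    float energy = 0.0f;  // finite budget spent on moving and spawning
    int parent = -1;      // index of the parent vertex; parent links form the skeleton
    bool alive = true;
};

struct GrowthParams {
    float moveCost = 1.0f;          // energy spent per step of motion
    float stepSize = 0.05f;         // range of motion per step
    float spawnProbability = 0.1f;  // chance of spawning instead of moving
    float minSpawnEnergy = 2.0f;    // a vertex below this cannot reproduce
    float childShare = 0.5f;        // fraction of the parent's energy given to a child
    float hueMutation = 0.02f;      // standard deviation of colour mutation
};

// Advance every living vertex by one step and return how many are still alive.
std::size_t step(std::vector<Vertex>& vertices, const GrowthParams& p, std::mt19937& rng) {
    assert(p.childShare > 0.0f && p.childShare < 1.0f && p.hueMutation > 0.0f);
    std::uniform_real_distribution<float> unit(0.0f, 1.0f);
    std::uniform_real_distribution<float> jitter(-1.0f, 1.0f);
    std::normal_distribution<float> mutation(0.0f, p.hueMutation);

    const std::size_t existing = vertices.size();  // offspring born now act next step
    for (std::size_t i = 0; i < existing; ++i) {
        if (!vertices[i].alive) continue;
        if (vertices[i].energy >= p.minSpawnEnergy && unit(rng) < p.spawnProbability) {
            Vertex child = vertices[i];  // inherits position and attributes
            child.energy = vertices[i].energy * p.childShare;
            child.hue = std::clamp(child.hue + mutation(rng), 0.0f, 1.0f);
            child.parent = static_cast<int>(i);
            vertices[i].energy -= child.energy;  // spawning redistributes energy
            vertices.push_back(child);
        } else {
            Vertex& v = vertices[i];  // moving consumes energy
            v.position.x += p.stepSize * jitter(rng);
            v.position.y += p.stepSize * jitter(rng);
            v.position.z += p.stepSize * jitter(rng);
            v.energy -= p.moveCost;
        }
        // Out of energy: the vertex "dies" and stays in place as part of the skeleton.
        if (vertices[i].energy <= 0.0f) vertices[i].alive = false;
    }
    std::size_t alive = 0;
    for (const Vertex& v : vertices) alive += v.alive ? 1 : 0;
    return alive;
}
```

With these defaults, spawning only redistributes energy while every move consumes some, so a colony seeded with a finite budget eventually dies out and leaves its skeleton behind.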

The experiment was designed with both technical and conceptual goals in mind. Technically, it is written in C++/openFrameworks and combines and alters a number of computational models within a single codebase: vertex motion is based on the boids model [Reynolds 1987], combined with a nearest-neighbour search to keep the frame rate high. Conceptually, it is a proof of concept for a hybrid approach, demonstrating how swarming, mutation and cell-division behaviours may be combined to produce biomorphic behaviour without modelling any particular physical phenomenon.
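
The boids component can be sketched as follows. This is an illustrative implementation of Reynolds' three rules (separation, alignment, cohesion), not the project's openFrameworks code; a uniform spatial grid stands in for the nearest-neighbour search, whose actual data structure is not documented here. `Vec3`, `BoidParams`, `cellKey`, `stepBoids` and the weights are hypothetical, and openFrameworks types are avoided so the snippet compiles on its own.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <unordered_map>
#include <vector>

struct Vec3 { float x = 0, y = 0, z = 0; };
Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
float length(Vec3 a) { return std::sqrt(a.x * a.x + a.y * a.y + a.z * a.z); }

struct Boid { Vec3 position, velocity; };

// Illustrative weights; the original project's values are not documented here.
struct BoidParams {
    float radius = 1.0f;  // neighbourhood radius, also used as the grid cell size
    float separation = 1.5f, alignment = 1.0f, cohesion = 1.0f;
    float maxSpeed = 2.0f;
};

// Exact key for integer cell coordinates, assuming |coordinate| < 2^20.
std::uint64_t cellKey(int x, int y, int z) {
    const std::int64_t bias = 1 << 20;
    return (static_cast<std::uint64_t>(x + bias) << 42) |
           (static_cast<std::uint64_t>(y + bias) << 21) |
           static_cast<std::uint64_t>(z + bias);
}

void stepBoids(std::vector<Boid>& boids, const BoidParams& p, float dt) {
    assert(p.radius > 0.0f);
    auto cell = [&](float v) { return static_cast<int>(std::floor(v / p.radius)); };

    // Bucket boids by grid cell so each one only examines the 27 surrounding cells.
    std::unordered_map<std::uint64_t, std::vector<std::size_t>> grid;
    for (std::size_t i = 0; i < boids.size(); ++i) {
        const Vec3& q = boids[i].position;
        grid[cellKey(cell(q.x), cell(q.y), cell(q.z))].push_back(i);
    }

    std::vector<Vec3> next(boids.size());
    for (std::size_t i = 0; i < boids.size(); ++i) {
        const Boid& b = boids[i];
        Vec3 separate, heading, centre;
        int neighbours = 0;
        const int cx = cell(b.position.x), cy = cell(b.position.y), cz = cell(b.position.z);
        for (int dx = -1; dx <= 1; ++dx)
            for (int dy = -1; dy <= 1; ++dy)
                for (int dz = -1; dz <= 1; ++dz) {
                    auto it = grid.find(cellKey(cx + dx, cy + dy, cz + dz));
                    if (it == grid.end()) continue;
                    for (std::size_t j : it->second) {
                        if (j == i) continue;
                        const Vec3 away = b.position - boids[j].position;
                        const float d = length(away);
                        if (d == 0.0f || d >= p.radius) continue;
                        separate = separate + away * (1.0f / (d * d));  // stronger when closer
                        heading = heading + boids[j].velocity;
                        centre = centre + boids[j].position;
                        ++neighbours;
                    }
                }
        Vec3 v = b.velocity;
        if (neighbours > 0) {
            const float inv = 1.0f / static_cast<float>(neighbours);
            v = v + separate * (p.separation * dt)                     // separation
                  + (heading * inv - b.velocity) * (p.alignment * dt)  // alignment
                  + (centre * inv - b.position) * (p.cohesion * dt);   // cohesion
        }
        const float speed = length(v);
        if (speed > p.maxSpeed) v = v * (p.maxSpeed / speed);
        next[i] = v;
    }
    for (std::size_t i = 0; i < boids.size(); ++i) {  // apply all updates together
        boids[i].velocity = next[i];
        boids[i].position = boids[i].position + next[i] * dt;
    }
}
```

Because the cell size equals the neighbourhood radius, every neighbour within range is guaranteed to lie in one of the 27 cells around a boid, so each update inspects only nearby boids rather than the whole flock.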

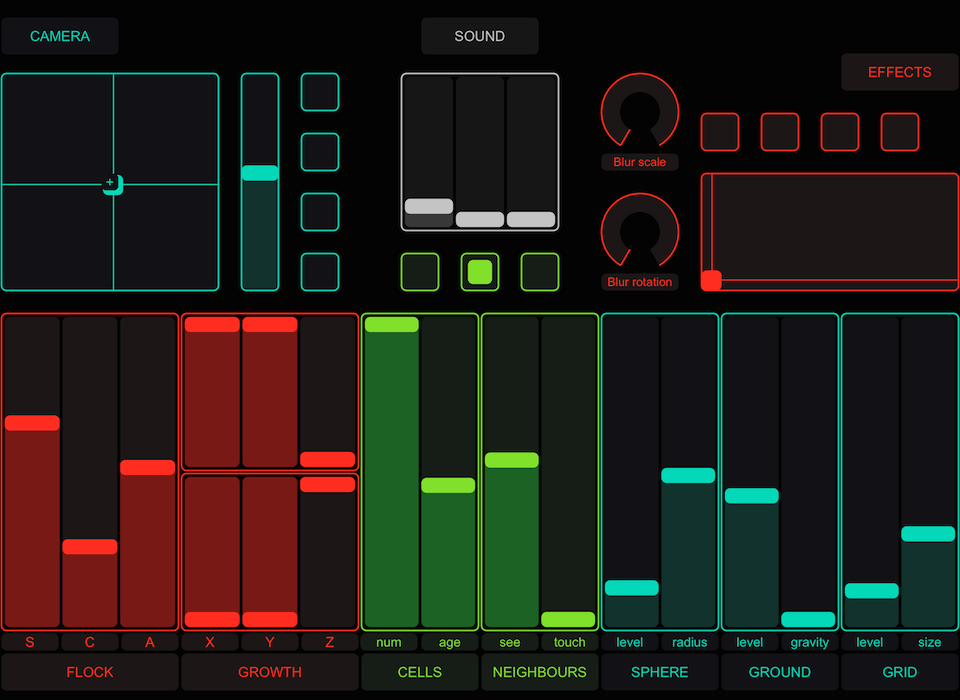

Colony Type-B is an audio-visual live performance tool featuring a population of points that can move, interact, spawn, die and react to live audio. The software was used to produce sound-reactive visuals for The infinite bridge, a multidisciplinary live performance project that premiered at the Royal College of Music in May 2015.

Harnessing complex dynamical systems for live performance holds great potential for diversifying the visual outcome. However, it also poses a challenge: because these systems are inherently unpredictable, they are notoriously hard to control. In fact, much of the work in creating this type of software lies not in programming the behaviour itself but in tuning its parameters to maximise the system's autonomy while retaining sufficient command over it. Constraining the parameters ensures that the system behaves consistently and reliably during a performance, but at the cost of making it monotonous and predictable. Allowing it too much freedom, on the other hand, may yield a significantly wider expressive range, but at the cost of unpredictability and unreliability. It is not unlikely for a sufficiently sophisticated swarm implementation to decide to leave the stage in the middle of a show.

Type-B addresses this narrow window by maintaining a constant tension between real-time, centralised commands from a human operator and the distributed forces that drive the swarm’s intricate behaviour. This tension between global and local forces allows different forces and constraints to be exerted and relaxed in real time. The interactive approach attempts to strike a careful balance between usability and expressive range, resulting in a relatively manageable tool capable of expressing an unusually wide range of spatial network topologies.
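
One possible way to express this tension between global and local forces is a single blending control, sketched below under loud assumptions: the operator's command is modelled as a target point, `autonomy` weights the swarm's own (for example, boids) force against that command, and a stage radius adds a restoring pull that keeps members from wandering off. `OperatorControls`, `blendedForce` and all values are hypothetical, not Type-B's actual controls.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

struct Vec3 { float x = 0, y = 0, z = 0; };
Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
float length(Vec3 a) { return std::sqrt(a.x * a.x + a.y * a.y + a.z * a.z); }

// Hypothetical operator controls, e.g. mapped to faders during a performance.
struct OperatorControls {
    Vec3 target;               // centralised command: where the swarm should gather
    float autonomy = 0.5f;     // 0 = follow the operator only, 1 = local forces only
    float stageRadius = 5.0f;  // hard boundary around the origin
};

// Blend a member's locally computed force with the operator's global command,
// plus a restoring force once the member strays beyond the stage radius.
Vec3 blendedForce(Vec3 position, Vec3 localForce, const OperatorControls& c) {
    assert(c.stageRadius > 0.0f);
    const float a = std::clamp(c.autonomy, 0.0f, 1.0f);
    Vec3 force = localForce * a + (c.target - position) * (1.0f - a);
    const float d = length(position);
    if (d > c.stageRadius)  // pull back in proportion to how far it has strayed
        force = force - position * ((d - c.stageRadius) / d);
    return force;
}
```

Relaxing `autonomy` towards 1 hands the stage to the swarm's local dynamics, while the stage radius remains a constraint that holds regardless of the blend.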

In addition, each member of the swarm “listens” to a particular frequency range in an incoming audio stream. When those frequencies are more present, the corresponding members absorb more “energy”, gaining increased freedom of motion and the ability to spawn new members (which in turn subscribe to a similar frequency range). When a frequency is absent, its subscribing members quickly die out. The sound input thus acts as a “force of nature” that regulates population dynamics, making the swarm sound-reactive by virtue of natural selection.
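
The feeding rule above can be sketched as a small population update. The sketch assumes per-band magnitudes already normalised to [0, 1] (for example, from an FFT of the incoming audio, which is not shown), a constant metabolic cost, and division at a fixed energy threshold, with the child subscribing to the same or an adjacent band. `Member`, `AudioEcology`, `updatePopulation` and the constants are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

// Hypothetical member state: only what the feeding rule needs.
struct Member {
    std::size_t band;  // index of the frequency band this member "listens" to
    float energy;
};

// Illustrative constants, not values from the original software.
struct AudioEcology {
    float gain = 4.0f;            // energy absorbed per second at full band magnitude
    float metabolism = 1.0f;      // energy spent per second just to stay alive
    float spawnThreshold = 2.0f;  // energy needed to divide
};

// bands: per-band magnitudes normalised to [0, 1]; the audio analysis itself
// is outside this sketch.
void updatePopulation(std::vector<Member>& members, const std::vector<float>& bands,
                      const AudioEcology& eco, float dt, std::mt19937& rng) {
    assert(!bands.empty());
    std::uniform_int_distribution<int> drift(-1, 1);
    const int lastBand = static_cast<int>(bands.size()) - 1;

    std::vector<Member> survivors;
    survivors.reserve(members.size());
    for (Member m : members) {
        m.energy += (eco.gain * bands.at(m.band) - eco.metabolism) * dt;
        if (m.energy <= 0.0f) continue;  // its frequencies are absent: it dies out
        if (m.energy >= eco.spawnThreshold) {
            // Divide: the child subscribes to the same or an adjacent band.
            const int childBand = std::clamp(static_cast<int>(m.band) + drift(rng), 0, lastBand);
            m.energy *= 0.5f;
            survivors.push_back({static_cast<std::size_t>(childBand), m.energy});
        }
        survivors.push_back(m);
    }
    members.swap(survivors);
}
```

Freedom of motion could be tied to the same quantity, for instance by scaling each member's maximum speed by its energy.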

Cellular Automata

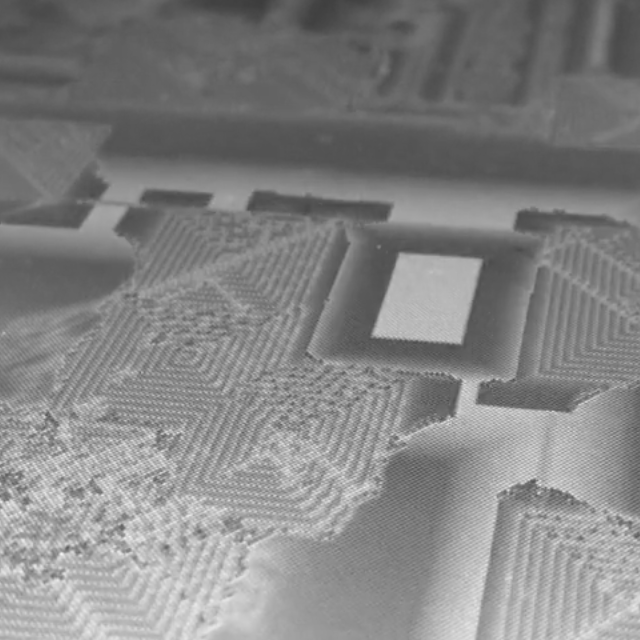

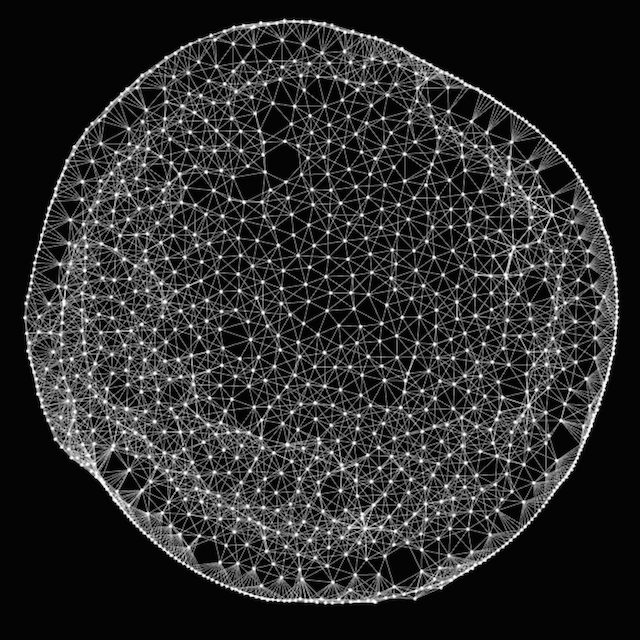

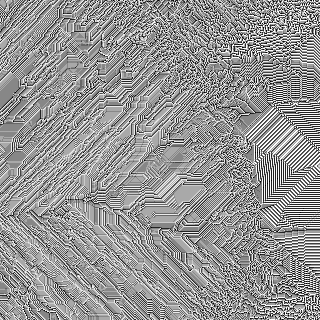

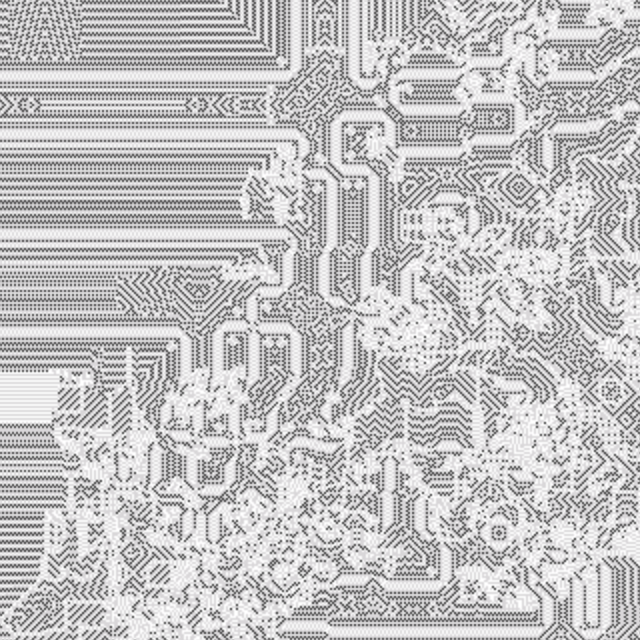

Colony Type-C started as an experiment to develop a hardware-accelerated implementation of the Abelian sandpile cellular automaton model. In this model, each pixel (fragment) on screen is likened to a pile onto which grains of sand are placed using the mouse. When a pile reaches four grains, it “collapses” and distributes them to its four adjacent neighbours, one grain each. Once the model was implemented, the following modifications were conceived:
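
For reference, the unmodified model can be sketched on the CPU with one synchronous update per pass: every cell reads only the previous state and writes only its own new value, the same data flow a fragment shader rendering between two ping-ponged textures would use. `Grid`, `topplePass` and `addGrains` are illustrative names, and grains toppling off the edge are simply lost, a common boundary choice that the text does not specify.

```cpp
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Integer grain counts on a width x height grid.
struct Grid {
    int width, height;
    std::vector<int> cells;
    Grid(int w, int h) : width(w), height(h), cells(static_cast<std::size_t>(w) * h, 0) {}

    // Cells outside the grid read as empty and never topple.
    int get(int x, int y) const {
        if (x < 0 || y < 0 || x >= width || y >= height) return 0;
        return cells[static_cast<std::size_t>(y) * width + x];
    }
    int& at(int x, int y) {
        assert(x >= 0 && y >= 0 && x < width && y < height);
        return cells[static_cast<std::size_t>(y) * width + x];
    }
};

// One synchronous pass: a cell holding 4 or more grains loses 4, and every cell
// gains 1 from each neighbour that topples. Returns true if anything toppled.
bool topplePass(const Grid& src, Grid& dst) {
    bool toppled = false;
    for (int y = 0; y < src.height; ++y)
        for (int x = 0; x < src.width; ++x) {
            int v = src.get(x, y);
            if (v >= 4) { v -= 4; toppled = true; }
            v += (src.get(x - 1, y) >= 4) + (src.get(x + 1, y) >= 4) +
                 (src.get(x, y - 1) >= 4) + (src.get(x, y + 1) >= 4);
            dst.at(x, y) = v;
        }
    return toppled;
}

// Add grains at (x, y), e.g. under the mouse, then relax until every cell holds < 4.
void addGrains(Grid& grid, int x, int y, int count) {
    grid.at(x, y) += count;
    Grid scratch = grid;
    while (topplePass(grid, scratch)) std::swap(grid, scratch);
}
```

Because the model is Abelian, the stable configuration it reaches does not depend on the order in which piles collapse, which is what makes a fully parallel, per-fragment update valid.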

1. Continuous state: Instead of using discrete values to describe the state of each cell (originally between 0 and 4), the model was converted to a continuous 32-bit floating-point state. This, of course, turned the sand metaphor into something far less tangible. The new values were clamped to the normalised range 0.0 to 1.0; however, cells need not reach 1.0 in order to collapse. Continuous-state cellular automata are less commonly known than discrete-state models, but they can express a much wider range of phenomena, since the space of possible continuous behaviours is vastly larger than that of discrete ones.

2. Conservation of matter is ignored: The original sandpile algorithm dictates that when a cell “collapses”, it distributes its contents evenly, so that the total number of grains is conserved. In the modified version, the total a collapsing cell hands out need not equal its contents: each of its four neighbours may absorb a quantity larger (or smaller) than 25% of it. The amount gained by each neighbour is defined uniformly across all cells as a variable ranging between 0.0 and 1.0. Relinquishing the physical metaphor was, in retrospect, perhaps a pivotal decision, as it later became the methodological backbone of my PhD research.

3. Live-coded implementation: The program and its parameters can be modified in real time. Beyond the dramatic speed increase that hardware acceleration provides, a GLSL-driven cellular automaton can be edited while it is running. This “live-coded” approach enables a much more exploratory workflow: because the program does not need to be recompiled after each change, new variations are easy to discover and collect.
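
Modifications 1 and 2 can be sketched as a per-cell rule on a continuous field. This is one reading of the text, not the original shader: a cell at or above `threshold` empties completely, and each neighbour gains `share` times the collapsing cell's value, so `share = 0.25` conserves matter while other values create or destroy it. `Rule`, `Field`, `cellStep`, `step` and the defaults are hypothetical, and `threshold` is assumed to be greater than 0. In a GLSL version, `threshold` and `share` would be natural candidates for uniforms that can be tweaked live.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical parameters for the continuous, non-conserving variant.
struct Rule {
    float threshold = 0.8f;  // a cell collapses at or above this (need not be 1.0)
    float share = 0.3f;      // fraction of a collapsing cell's value each neighbour gains
};

struct Field {
    int width, height;
    std::vector<float> cells;  // continuous 32-bit state, clamped to [0, 1]
    Field(int w, int h) : width(w), height(h), cells(static_cast<std::size_t>(w) * h, 0.0f) {}
    float get(int x, int y) const {
        if (x < 0 || y < 0 || x >= width || y >= height) return 0.0f;
        return cells[static_cast<std::size_t>(y) * width + x];
    }
    float& at(int x, int y) {
        assert(x >= 0 && y >= 0 && x < width && y < height);
        return cells[static_cast<std::size_t>(y) * width + x];
    }
};

// Per-cell rule, evaluated the way a fragment shader would: read-only input.
float cellStep(const Field& f, int x, int y, const Rule& r) {
    auto released = [&](int nx, int ny) {  // value a collapsing neighbour gives away
        const float v = f.get(nx, ny);
        return v >= r.threshold ? v : 0.0f;
    };
    float v = f.get(x, y);
    if (v >= r.threshold) v = 0.0f;  // a collapsing cell empties
    v += r.share * (released(x - 1, y) + released(x + 1, y) +
                    released(x, y - 1) + released(x, y + 1));
    return std::clamp(v, 0.0f, 1.0f);
}

// One synchronous update of the whole field via a scratch buffer.
void step(Field& f, Field& scratch, const Rule& r) {
    for (int y = 0; y < f.height; ++y)
        for (int x = 0; x < f.width; ++x) scratch.at(x, y) = cellStep(f, x, y, r);
    std::swap(f, scratch);
}
```

Unlike the original sandpile, this variant need not ever settle, so it would be run for a fixed number of steps per frame rather than relaxed to a stable state.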

Together, these modifications effectively resulted in a new computational model capable of generating a surprisingly diverse range of emergent, higher-order structures. Remarkably, the newly discovered formations bear almost no resemblance to the original sandpile algorithm. For the final exhibition, a number of variations were 3D printed to form a collection of petri dishes. A custom-built, light-sensitive embedded computer was also constructed, allowing playful and intuitive interaction using a light source.